A Healthcare Revolution or Hype?

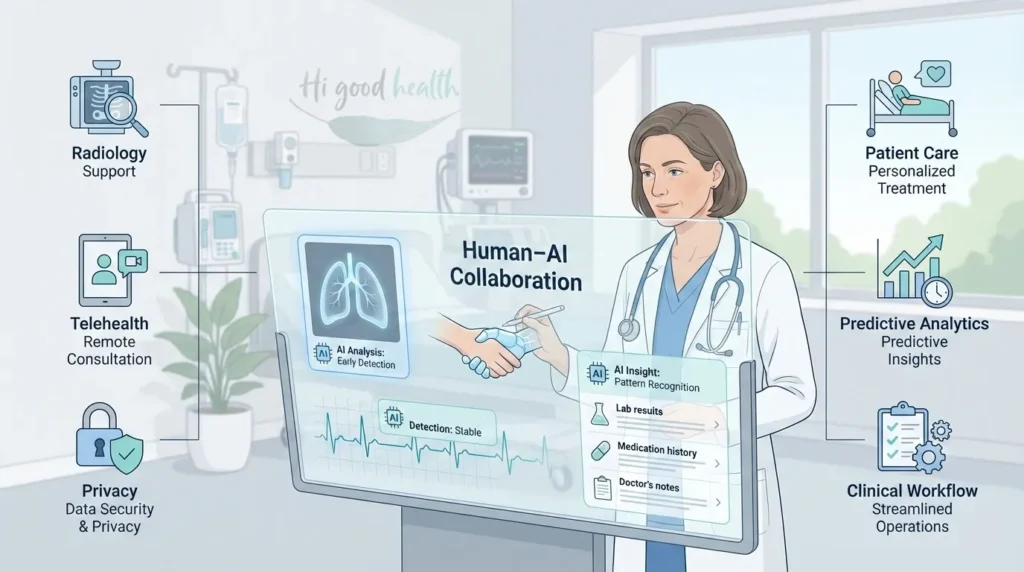

In 2026, artificial intelligence (AI) is no longer a futuristic concept in American healthcare – it is here, shaping everything from hospital workflows to personal wellness apps. Algorithms now help radiologists detect cancer, predict cardiac events, and even draft patient records. Telehealth platforms deploy AI-powered chatbots to triage patients before they ever speak with a doctor.

Supporters call AI the most transformative leap in medicine since antibiotics, promising faster, more accurate, and more affordable care. Critics warn of bias, privacy breaches, and an erosion of the human touch in healing. For patients, the stakes are high: AI has the potential to save lives, but if used without proper oversight, it can also contribute to serious errors.

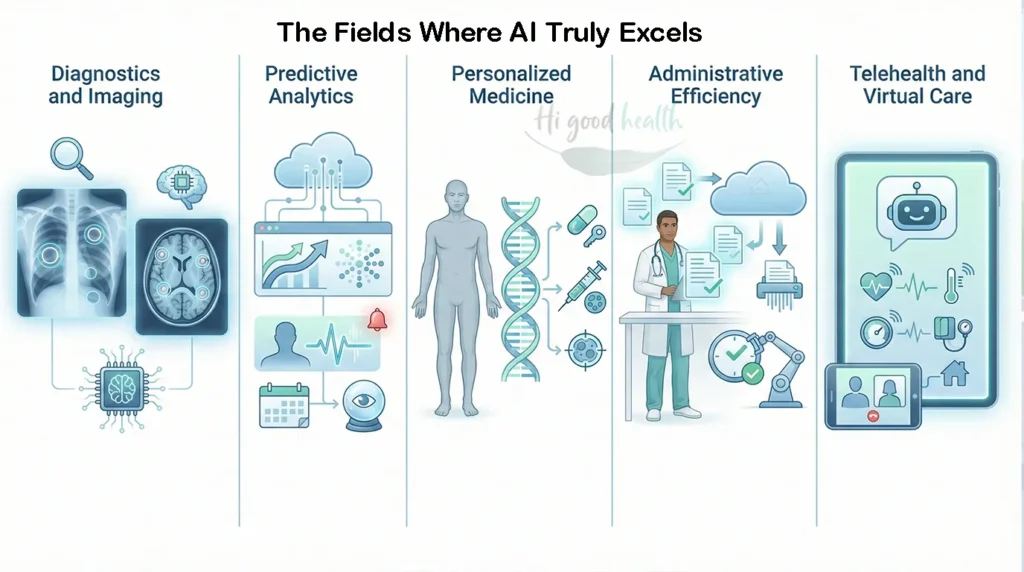

Where AI Shows Real Promise

1. Diagnostics & Imaging

Radiology: AI tools analyze X-rays, CT scans, and MRIs for tumors, fractures, and lung disease.

FDA approvals: As of 2026, dozens of AI-powered diagnostic tools are cleared for US use (see the FDA’s AI-enabled medical devices database).

One recent example is the FDA’s approval of Aidoc’s comprehensive triage solution.

Accuracy: In some research settings, AI systems for breast and lung imaging have matched or, in certain cases, modestly exceeded radiologists’ performance for specific tasks – when used as assistive tools rather than stand-alone replacements (National Cancer Institute: https://www.cancer.gov/research/infrastructure/artificial-intelligence).

2. Predictive Analytics

- AI models crunch data from EHRs (electronic health records) to flag patients at risk of heart failure, sepsis, or readmission.

- Hospitals use predictive dashboards to allocate resources and prevent ER overcrowding.

- These systems help clinicians prioritize care but should always be combined with medical judgment.

To learn how AI-powered wearable diagnostic devices may help reduce frequent tests, see our detailed blog post, “Are frequent blood & other diagnostic tests really necessary.”

3. Personalized Medicine

- AI tailors treatments based on genetic profiles.

- Oncology uses machine learning to match patients with the most effective targeted therapies.

- This approach, often called “precision medicine,” aims to find the right treatment for each individual patient.

4. Administrative Efficiency

- AI reduces paperwork by generating visit summaries and insurance codes.

- Doctors reclaim time for patients, reducing burnout.

- Early studies show a reduction of up to 25% in administrative tasks at hospitals using AI documentation tools.

5. Telehealth & Virtual Care

- AI chatbots triage symptoms before a telemedicine consult.

- Remote monitoring tools alert clinicians when vitals deviate from safe ranges.
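For readers curious about what "alerting when vitals deviate from safe ranges" can look like under the hood, here is a minimal sketch. The vital names and ranges are hypothetical values chosen for illustration, not clinical guidance; real monitoring systems use limits set by clinicians for each patient and are usually more sophisticated than a simple range check.

```python
from dataclasses import dataclass

# Hypothetical alert ranges for illustration only (an assumption, not
# clinical guidance); real devices use clinician-configured limits.
SAFE_RANGES = {
    "heart_rate_bpm": (50, 110),
    "spo2_percent": (92, 100),
    "systolic_mmhg": (90, 160),
}


@dataclass
class Reading:
    vital: str
    value: float


def out_of_range(readings):
    """Return a message for each reading outside its configured range."""
    alerts = []
    for r in readings:
        low, high = SAFE_RANGES[r.vital]
        if not (low <= r.value <= high):
            alerts.append(f"{r.vital}={r.value} outside {low}-{high}")
    return alerts


# Made-up readings from a wearable patch.
readings = [
    Reading("heart_rate_bpm", 72),
    Reading("spo2_percent", 89),
    Reading("systolic_mmhg", 168),
]
alerts = out_of_range(readings)
for message in alerts:
    print("ALERT:", message)
```

In this made-up example the oxygen and blood pressure readings trigger alerts, while the heart rate does not. The alert only tells a clinician to look; deciding what it means remains a human judgment.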

Important note: These tools should never replace in-person assessment when symptoms are severe, persistent, or worrying.

To learn more about AI-powered blood pressure monitoring, see our detailed blog post, “The Best Cuffless Blood Pressure Monitors of 2026 – Are These a Game Changer.”

Case Studies: Americans & AI-Driven Care

Case 1: Lisa, 48, California

Her mammogram was initially read as “normal,” but an AI tool detected subtle anomalies. A biopsy confirmed early-stage breast cancer, caught months earlier than a human eye alone might have caught it. Treatment was successful.

Case 2: James, 62, Florida

With congestive heart failure, James wore a patch that transmitted real-time data. AI algorithms predicted a potential flare-up and alerted his doctor, preventing hospitalization.

Case 3: Maria, 35, New York

When Maria logged into her insurer’s app for abdominal pain, an AI chatbot suggested “acid reflux.” But her condition worsened. At the ER, she was diagnosed with appendicitis. The delayed triage could have resulted in serious complications if she had not sought further medical care.

Note: These case studies are illustrative examples based on documented AI capabilities and risks, not actual patient cases.

The Pitfalls Patients Face

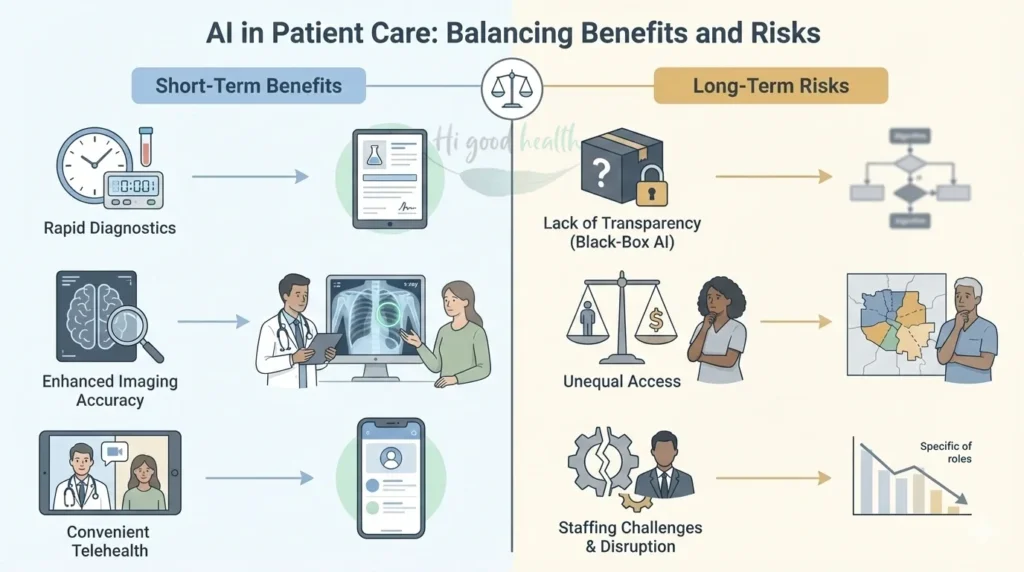

1. Bias & Inequity

- AI trained on biased datasets may produce inaccurate results for certain groups.

- Example: Algorithms predicting kidney function have been shown to underestimate disease severity in Black patients, potentially delaying necessary treatment (Journal of Medical Internet Research: https://www.jmir.org/2025/1/e69678).

- The concern: If training data mainly comes from one demographic group, the AI may not work as well for others.
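One way to make this concern concrete is to measure how often a tool catches real disease in each patient group, rather than looking at one overall number. The sketch below uses made-up records and hypothetical group names purely for illustration; it does not describe any real product or study.

```python
from collections import defaultdict

# Made-up records for illustration: (group, has_disease, ai_flagged).
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, True), ("group_a", True, False),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", True, False), ("group_b", True, False),
]


def sensitivity_by_group(rows):
    """Share of truly sick patients the tool flagged, per group."""
    caught = defaultdict(int)
    sick = defaultdict(int)
    for group, has_disease, flagged in rows:
        if has_disease:
            sick[group] += 1
            if flagged:
                caught[group] += 1
    return {group: caught[group] / sick[group] for group in sick}


rates = sensitivity_by_group(records)
overall = sum(1 for _, sick, flagged in records if sick and flagged) / len(records)
print("Overall:", overall)
print("By group:", rates)
```

In this toy data the tool catches half of all cases overall, which hides the fact that it catches three in four cases in one group and only one in four in the other. This is why per-group performance reporting matters.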

2. Privacy Concerns

- While many healthcare AI systems use de-identified data, re-identification risks still exist.

- Real-world breaches like the 2023 HCA Healthcare hack exposed millions of patient records.

- Patients should ask their providers how their data is protected and who has access to it.

- Important: HIPAA protects patient data in traditional healthcare settings, but gaps exist when technology companies handle de-identified information.
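To see why "de-identified" does not always mean anonymous, consider a rough sketch of the underlying idea: even with names removed, a combination of ordinary details can point to a single person. The rows and field choices below are invented for illustration, and real re-identification risk assessments are considerably more involved.

```python
from collections import Counter

# Made-up "de-identified" rows: names removed, but ZIP code,
# birth year, and sex remain.
rows = [
    ("90210", 1975, "F"),
    ("90210", 1975, "F"),
    ("90210", 1982, "M"),
    ("10001", 1990, "F"),
    ("10001", 1990, "F"),
    ("10001", 1958, "M"),
]


def unique_combinations(records):
    """Return combinations of details that appear exactly once."""
    counts = Counter(records)
    return [combo for combo, n in counts.items() if n == 1]


at_risk = unique_combinations(rows)
print(f"{len(at_risk)} of {len(rows)} rows are unique:", at_risk)
```

Any row whose combination of details is unique in the dataset could, in principle, be matched against another source that does contain names, such as a public record.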

3. Overreliance on Algorithms

- Risk of “automation bias,” where doctors may trust AI recommendations even when they conflict with clinical judgment.

- Research confirms this as a legitimate concern – clinicians may overly trust “black-box” algorithms despite transparency concerns (Journal of Medical Internet Research: https://www.jmir.org/2025/1/e69678).

- Patients often don’t know when AI is behind their diagnosis, making informed consent challenging.

4. Gaps in Regulation

- The FDA has cleared dozens of AI tools, but oversight is evolving and struggles to keep pace with rapid development.

- There is still no unified national standard that ensures all AI tools are rigorously tested across diverse populations – existing frameworks are still developing.

- This means some AI tools may reach patients before they’ve been thoroughly tested on populations that reflect real-world diversity.

5. Erosion of Human Touch

- Patients value empathy, nuance, and trust – qualities that AI cannot replicate.

- Overuse of chatbots risks alienating vulnerable groups like seniors, non-English speakers, or those with complex conditions.

- Healthcare works best when technology supports, rather than replaces, the human connection between patients and clinicians.

The Economics of AI in US Healthcare

Potential cost savings: Consulting firm Accenture estimated that AI could potentially help reduce U.S. healthcare spending by up to $150 billion annually by 2026, primarily through administrative automation, improved diagnostics, and more efficient care delivery (https://www.icthealth.org/news/accenture-ai-will-lead-to-150-billion-in-annual-savings-by-2026).

While we’ve reached that target year, the full savings haven’t materialized – though early adopters are seeing 15-30% efficiency gains and 25% administrative cost reductions, suggesting the transformation is underway but slower than initially forecast.

Rising investment: Market analysts estimate that the U.S. healthcare AI market could reach $40-50 billion by 2030 (https://www.marketsandmarkets.com/PressReleases/us-artificial-intelligence-healthcare.asp), reflecting rapid adoption across hospitals, insurers, and digital health companies.

Impact on patient bills: In some cases, AI-enabled diagnostics and automation may reduce costs for patients. However, advanced AI-driven therapies, robotic systems, and precision medicine tools often come with high price tags, and savings are not always passed on to patients (https://www.aalpha.net/blog/cost-of-implementing-ai-in-healthcare).

To learn more about advanced AI-driven therapies, see our detailed blog post, “The Future of Wearable Sleep Tech: Beyond Smartwatches in 2026.”

Key concern: If insurers and healthcare systems deploy AI primarily to maximize profits rather than improve access and affordability, patients may see little financial benefit – despite system-wide efficiency gains. This will vary significantly by hospital, insurance plan, and geographic location.

The Science: How AI Actually Works (In Simple Terms)

Understanding the basics helps you ask better questions about your care:

Machine Learning (ML): Think of it as teaching computers to recognize patterns. Just like you might learn to spot storm clouds, ML algorithms “learn” from thousands of scans to spot tumors.

Natural Language Processing (NLP): This helps computers understand medical notes written by doctors. Instead of reading each record manually, AI can quickly find important details across thousands of patient charts.

Generative AI: The newest type – it can write summaries, draft letters to insurance companies, or answer patient questions based on medical knowledge.

The catch: AI is only as good as the data it learns from. If the training data has gaps or errors, the AI’s answers will too. This is the “garbage in, garbage out” problem.

To learn more about wearable health tech for seniors, read our blog post, “Wearable Health Tech for Seniors in 2025 – Hype vs Reality.”
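The toy example below sketches both ideas: a program "learning" a pattern from labeled examples, and what happens when some of those labels are wrong. It uses a deliberately simple method (averaging the examples for each label, sometimes called a nearest-centroid classifier) and a made-up measurement called spot size. Real medical imaging models are far more complex, so treat this as an illustration of the principle only.

```python
def train_centroids(examples):
    """Learn the average feature value for each label."""
    totals, counts = {}, {}
    for value, label in examples:
        totals[label] = totals.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: totals[label] / counts[label] for label in totals}


def predict(centroids, value):
    """Pick the label whose learned average is closest to the value."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))


# Made-up training data: (spot size in mm, label).
clean = [(2, "benign"), (3, "benign"), (4, "benign"),
         (9, "suspicious"), (10, "suspicious"), (11, "suspicious")]

# Same measurements, but two large spots were wrongly labeled "benign".
noisy = [(2, "benign"), (3, "benign"), (4, "benign"),
         (9, "benign"), (10, "benign"), (11, "suspicious")]

clean_model = train_centroids(clean)
noisy_model = train_centroids(noisy)

print("Clean data says:", predict(clean_model, 8))
print("Noisy data says:", predict(noisy_model, 8))
```

Trained on the clean examples, the model labels an 8 mm spot as suspicious. Trained on the mislabeled examples, the same code calls it benign. Nothing in the program changed; only the quality of the data did.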

Short- vs Long-Term Impacts for Patients

Short-Term Benefits (What You Might Notice Now):

- Faster test results and lab reports

- Fewer missed diagnoses in radiology and imaging

- Easier access to virtual care appointments

- Less waiting time as administrative tasks speed up

Long-Term Risks (What Could Happen Over Time):

- Overdependence on “black-box” systems that patients and even some doctors don’t fully understand

- Widening health gaps if AI tools don’t serve diverse communities equally

- Possible job displacement affecting the availability of healthcare workers in your area

- Loss of the personal connection that makes healthcare feel human

Step-by-Step: How Patients Can Protect Themselves in the Age of AI

1. Ask Questions

- “Was AI used in my diagnosis or treatment plan?”

- “How accurate is this AI tool, and has it been tested on people like me?”

- “How is my health data being stored and protected?”

- You have every right to understand how technology is being used in your care.

2. Know Your Rights

- Under HIPAA (https://www.hhs.gov/hipaa/index.html), providers must give you a notice explaining how your health information may be used and shared, and many disclosures to third parties require your written authorization.

- Some states (e.g., California) have stricter AI and data privacy laws that give you additional protections.

- You can request copies of your medical records and ask who has accessed them.

3. Balance Convenience with Caution

- Telehealth AI tools are useful for minor issues, but trust your instincts.

- If an AI chatbot’s suggestion doesn’t feel right, seek a second opinion from a licensed clinician.

- Red-flag any persistent, worsening, or severe symptoms – always escalate to a human doctor.

4. Support Transparency

- Choose providers and hospitals that openly discuss their use of AI.

- Ask if they publish performance data showing how well their AI tools work across different patient groups.

- Patient advocacy can push the entire healthcare system toward more responsible AI use.

5. Stay Human-Centered

- Use AI as a tool to enhance care, not as a replacement for the doctor-patient relationship.

- The best outcomes happen when technology assists skilled, empathetic clinicians – not when it makes decisions alone.

What Experts Say

Leading health organizations emphasize cautious, ethical AI adoption:

American Medical Association (AMA): Warns that “equity and transparency must guide AI adoption” (https://www.ama-assn.org/system/files/ama-ai-principles.pdf) and publishes principles for responsible AI in healthcare.

FDA (2024): Acknowledges gaps in regulation and has pledged “continuous oversight” of adaptive AI tools that learn and change over time.

World Health Organization (WHO): Calls for “responsible AI” (https://www.who.int/publications/i/item/9789240029200) that prioritizes patient safety, fairness, and human rights in healthcare.

These guidelines exist to protect patients, but enforcement and real-world implementation are still catching up with the technology.

Expanded FAQs

1. Will AI replace my doctor?

No. AI handles tasks like paperwork, data analysis, and scan interpretation, but it cannot replace human empathy, judgment, or the personal connection you have with your doctor. Experts across the medical field emphasize that AI should be a tool to assist doctors, not replace the patient-doctor relationship. Your doctor’s expertise in understanding your unique situation remains irreplaceable.

2. Can AI detect cancer earlier than human doctors?

In certain research studies, AI tools analyzing X-rays, mammograms, and CT scans have matched or, in some cases, exceeded radiologists’ performance for specific tasks – but only when used as an assistive tool. These tools are most effective when they help doctors catch issues they might miss, not when they work alone. AI is best viewed as a “second set of eyes” rather than a replacement for skilled radiologists.

3. Is my health data safe if my doctor uses AI?

Not always. AI systems require massive amounts of data, which increases the risk of privacy breaches and leaks. While laws like HIPAA exist to protect your information, cyberattacks can still expose millions of patient records. Additionally, some AI companies may use de-identified data that could potentially be re-identified. Always ask your healthcare provider how your data is protected, who has access, and whether it’s shared with third parties.

4. Can AI make mistakes in diagnosis?

Yes. AI is not perfect and can make serious diagnostic errors, such as mistaking a life-threatening condition like appendicitis for a mild issue like acid reflux. There is also a documented risk of “automation bias,” where doctors may trust AI outputs too much, even when they conflict with clinical judgment. This is why AI should always be used alongside – never instead of – experienced medical professionals.

5. Is medical AI biased against certain groups?

It can be. If algorithms are trained primarily on data from one demographic group (for example, mostly white, male patients), they may produce less accurate results for women, minorities, and other underrepresented groups. Some algorithms have underestimated kidney disease severity in Black patients, leading to delayed treatment. Researchers and regulators are working to address these biases, but the problem persists in many current AI tools.

6. Will using AI lower my medical bills?

Not necessarily. While AI could save the healthcare industry billions of dollars, those savings often don’t reach patients directly. Some AI-driven diagnostics may cost less, but advanced AI-powered therapies and robotic systems can be very expensive. Whether you see lower bills depends on your hospital, insurance plan, and geographic location. It’s important to ask your provider about costs upfront.

7. What should I do if an AI chatbot diagnosis feels wrong?

Trust your instincts and your body. If an AI tool or chatbot suggests your symptoms are mild but you feel worse or something seems off, do not rely solely on the AI assessment. Always seek evaluation from a human doctor, especially for persistent, worsening, or severe symptoms. AI should never be the final word on your health – your own judgment and a clinician’s expertise matter most.

Final Thoughts

AI is transforming American healthcare in 2026, offering unprecedented opportunities to catch disease earlier, streamline care, and personalize treatment. But the risks are real: algorithmic bias, privacy threats, regulatory gaps, and the potential loss of human-centered care.

For patients, the wisest approach is informed balance – embrace AI’s promise where it genuinely helps, but demand transparency, equity, and strong safeguards from providers and policymakers. Ask questions, know your rights, and never let technology replace the human connection at the heart of healing.

The future of healthcare should be tech-assisted, not tech-dominated.

To learn more about how AI may help with living better, longer, read our blog post, “Longevity Lifestyle & ‘Augmented Biology’ – Living Better, Longer.”

Glossary

AI (Artificial Intelligence): Computer systems designed to mimic human decision-making and problem-solving.

ML (Machine Learning): A type of AI where algorithms learn from data patterns without being explicitly programmed for every scenario.

NLP (Natural Language Processing): AI technology that analyzes and understands human language, such as doctors’ medical notes.

EHR (Electronic Health Records): Digital versions of patients’ medical charts.

HIPAA (Health Insurance Portability and Accountability Act): US federal law that protects patient health information from being disclosed without consent.

Automation Bias: The tendency to trust computer outputs over human judgment, even when the computer may be wrong.

Algorithm: A set of rules or instructions that a computer follows to solve a problem or make a decision.

All reference links were valid and accessible as of 30 April 2026.

- FDA – AI in Medical Devices: U.S. Food and Drug Administration – Artificial Intelligence and Machine Learning in Software as a Medical Device

- AMA – Responsible AI Principles: American Medical Association – Principles for AI in Healthcare

- WHO – Ethics & Governance: World Health Organization – Ethics and Governance of Artificial Intelligence for Health

- Accenture – Healthcare AI Economic Impact: Accenture – Artificial Intelligence: Healthcare’s New Nervous System

- NIH/NCI – AI & Cancer Detection: National Cancer Institute – Artificial Intelligence in Cancer Research

- HHS – HIPAA Privacy Rule: U.S. Department of Health and Human Services – HIPAA for Individuals